There is something quietly insulting about being told that coding is solved while you are still debugging a production incident.

The insult is not in the capability of the tools. The tools are impressive. It is in the framing. The framing implies that programming was primarily a throughput problem, that engineers were constrained by keystrokes rather than cognition, and that once token generation became cheap the discipline itself would begin to dissolve.

But if you have spent any serious time inside a real distributed system, you know that the failures that matter were never caused by slow typing. They were caused by violated invariants, mismatched assumptions about ordering guarantees, retries that amplified partial outages, and state that existed in two places when it was only supposed to exist in one.

Typing was never the bottleneck.

What AI has collapsed is friction. What it has not collapsed is complexity.

I. Jevons in the Codebase

In 1865, William Stanley Jevons observed something counterintuitive about coal. When steam engines became more efficient, coal consumption did not fall. It rose. Efficiency reduced cost, and lower cost expanded usage. This became known as the Jevons paradox.

Software is now experiencing its Jevons moment.

As the marginal cost of generating code approaches zero, we do not write less software. We write more. Internal tools that once required justification now appear in an afternoon. Microservices proliferate because scaffolding them is conversational. Integrations multiply because adapters can be drafted faster than meetings can be scheduled.

The system does not shrink. It expands.

And with expansion comes surface area. Each new service introduces network boundaries, serialization contracts, partial failure modes, and implicit consistency assumptions. Each new feature introduces state transitions and new invariants that must hold across code paths no one has fully simulated.

Cheap code produces expensive coherence.

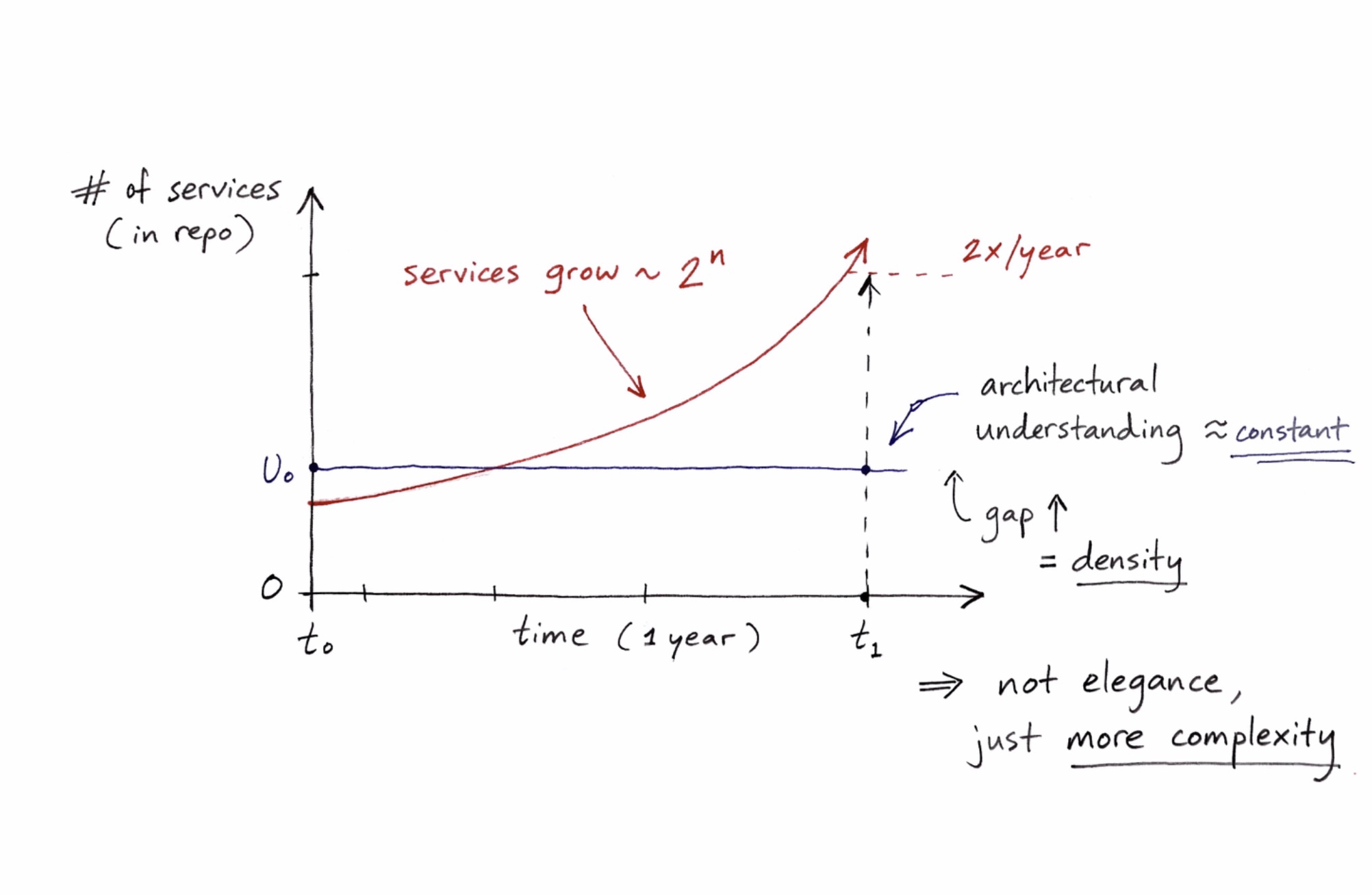

The paradox is not abstract. It is visible in repositories where the number of services doubles in a year while architectural understanding remains constant. The result is not elegance. It is density.

II. The Jagged Frontier

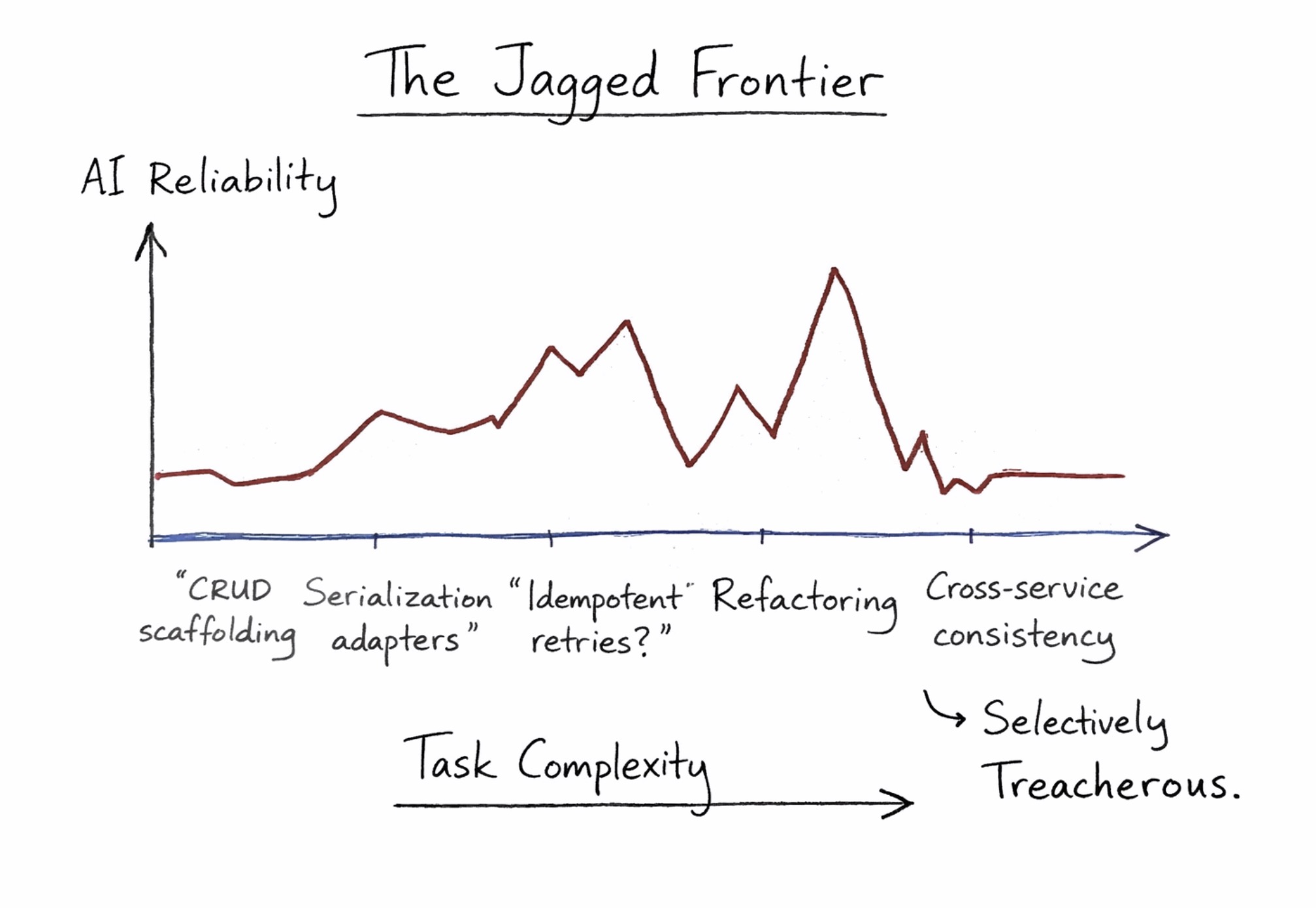

Ethan Mollick describes AI capability as a “Jagged Frontier.” Progress is not smooth across domains. Systems perform astonishingly well in some areas and fail abruptly in others.

This jaggedness is easy to observe in engineering workflows.

Large language models excel at boilerplate, translation, refactoring isolated functions, and scaffolding predictable patterns. They compress repetitive labor. They eliminate drudgery.

But they struggle in domains that require global reasoning over implicit constraints. Complex state machines. Concurrency semantics. Subtle distributed consistency guarantees. Security boundaries shaped by historical incidents.

This is not because they are unintelligent. It is because they are statistical systems operating over tokens, not causal models of your specific architecture.

A model can generate a plausible retry mechanism. It cannot know that, in your system, every retried request must be idempotent because of an eventual consistency tradeoff introduced two years ago during a database migration.
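To make the idempotency point concrete, here is a minimal sketch of one common way to make a retried request safe: the client sends a key with each logical request, and the server returns the stored result when it sees the same key again. The `ChargeService` class, the `idempotency_key` parameter, and the receipt format are all hypothetical names invented for illustration, and the in-memory dictionary stands in for whatever durable store a real system would need.

```python
from dataclasses import dataclass, field


@dataclass
class ChargeService:
    """Hypothetical payment handler that deduplicates by idempotency key."""

    # Maps idempotency key -> receipt of the first attempt that got through.
    _results: dict[str, str] = field(default_factory=dict)
    charges_made: int = 0

    def charge(self, idempotency_key: str, amount_cents: int) -> str:
        # A retried request carries the same key, so return the stored
        # receipt instead of charging a second time.
        if idempotency_key in self._results:
            return self._results[idempotency_key]
        self.charges_made += 1
        receipt = f"receipt-{self.charges_made}-{amount_cents}"
        self._results[idempotency_key] = receipt
        return receipt


def charge_with_retries(service, key, amount_cents, attempts=3):
    """Simulates a client that resends because it never saw a response."""
    receipt = None
    for _ in range(attempts):
        receipt = service.charge(key, amount_cents)
    return receipt


service = ChargeService()
receipt = charge_with_retries(service, "order-42", 1999)
```

Three attempts produce one charge. The retry loop itself is the easy part to generate; the requirement that the key exists, and that the store behind it is durable, is the part that lives in the system's history.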

The frontier is jagged because the local solution can be fluent while the global system becomes fragile.

If engineers mistake local fluency for systemic understanding, they begin to outsource judgment precisely where judgment matters most.

III. Invariants Are the Real Architecture

Ask any engineer who has survived a serious outage what went wrong, and the answer rarely involves syntax. It involves a broken assumption.

An invariant is a guarantee that must always hold. That a financial ledger balances. That a transaction is atomic. That a write either fully commits or fully fails. That a request is idempotent under retries. That a piece of state has a single authoritative owner.

These guarantees define the skeleton of a system.

Distributed systems theory has been obsessed with invariants for decades because they are where complexity lives. The CAP theorem does not describe syntax. It describes the choice a system must make between consistency and availability once the network partitions. Lamport clocks and vector clocks do not help you type faster. They help you reason about causality in a system where time is not globally agreed upon.
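As an illustration of what reasoning about causality looks like in code, the following is a small sketch of a Lamport logical clock. The class and method names are chosen for this example only. It shows the standard rule: increment on every local event, attach the counter to outgoing messages, and on receipt take the maximum of the local and received counters plus one.

```python
class LamportClock:
    """Minimal logical clock; names are illustrative, not from any library."""

    def __init__(self):
        self.time = 0

    def tick(self) -> int:
        """Local event: advance the counter."""
        self.time += 1
        return self.time

    def send(self) -> int:
        """Sending is an event; the returned timestamp travels with the message."""
        return self.tick()

    def receive(self, message_time: int) -> int:
        """On receipt, move past both the local and the sender's counter."""
        self.time = max(self.time, message_time) + 1
        return self.time


a, b = LamportClock(), LamportClock()
a.tick()                         # a is now at 1
stamp = a.send()                 # a is now at 2; the message carries 2
b.tick()                         # b is now at 1
received_at = b.receive(stamp)   # b becomes max(1, 2) + 1 = 3
```

The guarantee runs in one direction only: if one event causally precedes another, its timestamp is smaller. A smaller timestamp does not prove causal precedence, which is the gap vector clocks exist to close.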

Isolation levels in databases are not performance features. They are statements about which anomalies you are willing to tolerate.

None of this disappears because code generation becomes easier.

If anything, it becomes more critical to formalize these invariants explicitly. A language model can generate an implementation of a payment workflow. It cannot intuit that double charging a user is existentially unacceptable unless you encode that constraint.

This is why serious test suites become architectural instruments. A meaningful test is not checking that an endpoint returns 200. It is encoding a domain invariant. It is stating that under retries, under concurrency, under partial failure, this guarantee must hold.
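A rough sketch of such a test, assuming a toy in-memory `Ledger` invented for this example: the check does not care how `transfer` is implemented, only that the total amount of money is the same before and after, including when a failure is injected between the debit and the credit. The `fail_after_debit` flag is a hypothetical fault-injection hook, not a feature of any real library.

```python
class Ledger:
    """Toy in-memory ledger; balances are integer cents."""

    def __init__(self, balances):
        self.balances = dict(balances)

    def total(self):
        return sum(self.balances.values())

    def transfer(self, src, dst, amount, fail_after_debit=False):
        # Work on a staged copy and swap it in only on success, so a
        # failure between debit and credit leaves the ledger untouched.
        staged = dict(self.balances)
        if staged[src] < amount:
            raise ValueError("insufficient funds")
        staged[src] -= amount
        if fail_after_debit:
            raise RuntimeError("simulated crash between debit and credit")
        staged[dst] += amount
        self.balances = staged


def check_conservation_under_failure():
    ledger = Ledger({"alice": 100, "bob": 50})
    before = ledger.total()
    try:
        ledger.transfer("alice", "bob", 30, fail_after_debit=True)
    except RuntimeError:
        pass
    # Invariant: money is neither created nor destroyed, even on failure.
    assert ledger.total() == before
    assert ledger.balances == {"alice": 100, "bob": 50}
    # The same invariant must hold on the happy path.
    ledger.transfer("alice", "bob", 30)
    assert ledger.total() == before
    return ledger.balances


final_balances = check_conservation_under_failure()
```

An implementation that debited in place and then crashed would fail the first assertion, no matter how reasonable its code looked in review.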

When AI generates code to satisfy such tests, it operates inside boundaries you define. Without those boundaries, it optimizes for plausibility, not correctness.

Implementations are interchangeable. Invariants are not.

IV. The Conservation of Understanding

There is another constraint at play, one less discussed but more important than generation speed. Systems are limited not by the amount of code they contain, but by the amount of accurate mental simulation their designers can sustain.

Call this the conservation of understanding.

When writing code by hand, you rehearsed it. You traced execution paths. You imagined the unhappy path. You noticed where state mutated in ways that felt slightly dangerous. The friction was informative.

AI decouples artifact from rehearsal.

You describe intent. The implementation appears. It compiles. It looks correct. But unless you deliberately re-simulate the system, you have skipped the cognitive work that builds intuition.

The surface area of your system grows faster than your understanding of it.

This mismatch does not produce immediate catastrophe. It produces slow drift. A small assumption here. A copied pattern there. A retry loop added without deep thought. Over time, the system becomes statistically coherent and semantically brittle.

Understanding cannot be scaled as cheaply as code.

V. Architectural Gravity

In every mature system, architectural knowledge condenses.

A small group understands why a specific service must use strong consistency. A small group remembers that a particular ordering guarantee was introduced after a painful incident. A small group can trace a request across multiple services and predict where it might degrade.

This concentration is structural. Call it architectural gravity.

As AI increases local generation speed, architectural gravity intensifies. The burden of coherence grows. Someone must reconcile the expanding surface area with the fixed constraints of reality.

If organizations interpret AI leverage as justification to eliminate junior roles or compress apprenticeship, they erode the pipeline that produces future architects. System intuition forms through debugging real failures, through understanding why a theoretically elegant abstraction collapsed under load.

That sediment cannot be skipped.

The risk is not that AI replaces engineers. The risk is that organizations hollow out the layers that cultivate judgment while celebrating short-term velocity.

VI. We Were Never Typists

The persistent misunderstanding in this moment is that engineers were primarily typists.

Typing was incidental.

The work has always been architectural. Deciding where state should live. Defining consistency boundaries. Choosing between eventual and strong guarantees. Designing failure semantics that degrade gracefully instead of catastrophically.

These are tradeoff decisions.

They cannot be reduced to token prediction.

AI multiplies output. It reduces the cost of experimentation. It accelerates scaffolding. All of this is valuable.

But the scarce resource has migrated upward.

Scarcity is now in judgment. In boundary design. In invariant formalization. In mapping the jagged frontier without confusing statistical fluency for architectural safety.

Coding is not solved.

Syntax is commoditized.

Complexity remains.

And complexity, particularly in systems where state, time, and failure intersect, demands something AI does not possess: lived context, accumulated intuition, and the willingness to sit with a system long enough to feel where it might fracture.

The engineers who thrive will not be the fastest prompters. They will be the ones who understand that coherence does not emerge from generation speed, but from constraint, context, and deliberate architectural design.

Architecture has not become easier. It has merely become more necessary.

VII. The Discipline of Orchestration

If typing is no longer scarce, and output is no longer scarce, then the question becomes: what must be scarce on purpose?

The answer is constraint.

The forward path is not to resist AI, nor to surrender to it, but to embed it inside deliberate architectural discipline.

This begins with a reframing. AI is not a co-architect. It is an implementation amplifier. It accelerates local production. It does not own global coherence. The orchestrator does.

Which means the workflow inverts.

Instead of starting with implementation, you start with invariants. You define what must always be true before any code exists. You articulate the business guarantees, the failure semantics, the consistency model you are willing to accept. You write tests not to validate syntax, but to encode architectural commitments.

You formalize boundaries first.

Only then do you generate.

In this model, AI is not a replacement for engineering thought. It is a constrained executor operating within a corridor you designed. It produces candidate implementations that must satisfy explicit contracts. It can refactor locally. It can scaffold modules. But it does not get to redefine invariants.

The tests become the leash.

This is not nostalgia for test-driven development. It is structural necessity in an era where generation is cheap. If you do not externalize your constraints, the system will drift toward statistical plausibility instead of domain correctness.
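The inverted workflow can be sketched as a gate that every candidate implementation must pass, whoever or whatever wrote it. The example below assumes a hypothetical task, deduplicating events from an at-least-once delivery stream, and two hypothetical candidates. The invariants are written once and the candidates are treated as interchangeable.

```python
from typing import Callable

Dedupe = Callable[[list[str]], list[str]]


def candidate_set_based(events: list[str]) -> list[str]:
    # Plausible at a glance, but sorting a set discards delivery order.
    return sorted(set(events))


def candidate_order_preserving(events: list[str]) -> list[str]:
    seen: set[str] = set()
    out: list[str] = []
    for event in events:
        if event not in seen:
            seen.add(event)
            out.append(event)
    return out


def satisfies_invariants(dedupe: Dedupe) -> bool:
    """The contract: written before, and independently of, any candidate."""
    events = ["c", "a", "c", "b", "a"]
    result = dedupe(list(events))
    no_duplicates = len(result) == len(set(result))
    nothing_invented_or_lost = set(result) == set(events)
    first_seen_order_kept = result == ["c", "a", "b"]
    return no_duplicates and nothing_invented_or_lost and first_seen_order_kept


candidates = (candidate_set_based, candidate_order_preserving)
accepted = [f.__name__ for f in candidates if satisfies_invariants(f)]
```

Only the order-preserving candidate is accepted. The set-based one removes duplicates correctly and would pass a test that checked nothing else, which is the drift toward plausibility described above.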

VIII. Context as Infrastructure

The second shift is to treat context as infrastructure.

Most AI usage today is zero-shot improvisation. A vague prompt. A plausible answer. A merge request that “looks right.”

But models are context-sensitive systems. If you do not feed them architectural decisions, naming conventions, domain definitions, and explicit constraints, they will optimize for the average pattern across their training distribution.

Which means the solution is not better prompts. It is better documentation.

Version-controlled architectural decisions. Explicit domain language. Clearly defined service ownership. Written explanations of tradeoffs between consistency and availability.

In other words, make the system legible before you automate its expansion.

Context must precede generation.
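One way to picture context as infrastructure is to keep decisions as structured, version-controlled records and render them into the text that accompanies every generation request. This is only a sketch: the `DecisionRecord` fields, the `render_context` function, and the sample record are assumptions made for illustration, not a standard format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical shape for a version-controlled architectural decision."""

    title: str
    decision: str
    rationale: str
    owner: str


def render_context(records: list[DecisionRecord]) -> str:
    """Turn decision records into a plain-text preamble for a request."""
    lines = ["Architectural constraints (do not violate):"]
    for number, record in enumerate(records, start=1):
        lines.append(f"{number}. {record.title} (owner: {record.owner})")
        lines.append(f"   Decision: {record.decision}")
        lines.append(f"   Why: {record.rationale}")
    return "\n".join(lines)


records = [
    DecisionRecord(
        title="Ledger writes use strong consistency",
        decision="All balance updates go through the ledger service.",
        rationale="Balances must have a single authoritative owner.",
        owner="payments-team",
    ),
]
context = render_context(records)
```

The rendering is trivial on purpose. The value is in the records existing at all, being reviewed like code, and carrying the rationale alongside the rule.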

IX. Designing for the Jagged Frontier

The Jagged Frontier is not going away. It will shift, but it will remain uneven.

So orchestration requires mapping the frontier deliberately.

Low-risk, repetitive, well-understood patterns can be delegated aggressively. Boilerplate, adapters, predictable CRUD logic. Let the machine compress that.

High-risk domains remain human-owned.

Concurrency primitives. Financial logic. Authentication and authorization flows. Cross-service invariants. Schema migrations that cannot be rolled back.

This is not about mistrust. It is about risk modeling.

You do not let statistical fluency design your consistency guarantees.

The orchestrator’s skill becomes the ability to partition the system along risk boundaries and decide where leverage is safe.
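Partitioning along risk boundaries can be made explicit rather than left to habit. The sketch below assumes a hypothetical repository layout and hypothetical labels, and uses Python's standard `fnmatch.fnmatchcase` to map file paths to a policy. Rules are checked in order, and anything unmatched defaults to the conservative label.

```python
from fnmatch import fnmatchcase

# Hypothetical path patterns; the first match wins, so high-risk rules come first.
RISK_RULES = [
    ("services/payments/*", "human-owned"),
    ("services/auth/*", "human-owned"),
    ("migrations/*", "human-owned"),
    ("adapters/*", "delegable"),
    ("*/crud/*", "delegable"),
]


def classify(path: str, default: str = "human-owned") -> str:
    """Return the risk label for a path; unknown paths get the default."""
    for pattern, label in RISK_RULES:
        if fnmatchcase(path, pattern):
            return label
    return default


labels = {
    path: classify(path)
    for path in [
        "services/payments/ledger.py",
        "adapters/slack/client.py",
        "services/catalog/crud/items.py",
        "tools/report.py",
    ]
}
```

Defaulting to human ownership is a design choice: a path nobody has classified yet is treated as risky until someone decides otherwise.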

X. Apprenticeship by Design

If AI compresses implementation, organizations must deliberately preserve apprenticeship.

This means juniors do not disappear. They change shape.

Instead of spending months wiring boilerplate, they spend months studying system invariants, debugging generated code, tracing distributed flows, and learning why a seemingly correct implementation subtly violates domain constraints.

They learn architecture earlier.

Because the future shortage will not be in people who can prompt. It will be in people who understand why the prompt’s output is subtly wrong.

XI. The New Scarcity

What we are witnessing is not the death of software engineering. It is the relocation of scarcity.

Syntax is abundant. Surface area is abundant. Plausibility is abundant.

What is scarce is disciplined judgment. Constraint design. The ability to encode invariants as executable contracts. The patience to re-simulate a system even when a model has already written the code.

The orchestrator is not the fastest producer. The orchestrator is the slowest thinker in the room.

Not slow in intelligence. Slow by refusal. Slow to accept an implementation until its invariants are explicit. Slow to expand surface area without first strengthening boundaries.

AI makes building easier. It does not make being correct easier.

And so the solution forward is not technological. It is architectural. It is procedural. It is cultural.

We stop asking how fast we can generate. We start asking how deliberately we can constrain.

Typing was never the bottleneck. Understanding was. Now that typing is cheap, the cost of misunderstanding is higher than ever.

And the only sustainable response is orchestration.